Add A Robots.txt File To Your Next.js Website

The robots.txt file tells user agents (i.e. web-crawler software) which parts of your website they can or can't crawl. You write these instructions as rules that "allow" or "disallow" paths, either for individual user agents or for all of them.

It is a plain-text file and lives at the root of your website. For an example site with a base URL of www.example.com, the robots.txt file will live at the www.example.com/robots.txt URL.

To add a robots.txt file to your Next.js website, all you need to do is add the file to your /public directory.

Next.js serves any file inside the /public directory from the root URL of your website, so this file will be accessible at www.example.com/robots.txt in the browser.

Let's go over each step to get the robots.txt file added to your Next.js website.

On your development machine, navigate to your Next.js project directory.

If you haven't already created a /public folder, create one in the root of your project's directory with this command:

mkdir public

Then, create a new file in the /public directory called robots.txt:

cd public && touch robots.txt

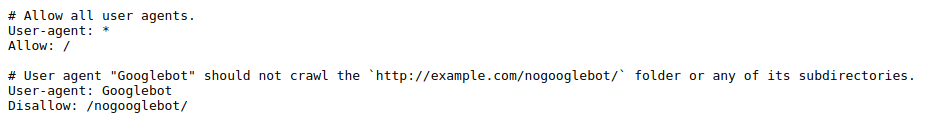

After that, open the new /public/robots.txt file in your code editor and add this code to it:

    # Allow all user agents.
    User-agent: *
    Allow: /

    # User agent "Googlebot" should not crawl the `http://example.com/nogooglebot/` folder or any of its subdirectories.
    User-agent: Googlebot
    Disallow: /nogooglebot/

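These are only example rules; replace them with the rules your site needs. Keep in mind that a crawler follows the most specific group that matches it, so Googlebot obeys its own group here and ignores the `*` group.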
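If you would rather script the steps so far, the following is a minimal sketch that creates the folder and writes the same example rules in one go. It assumes a POSIX shell and that you run it from the root of your Next.js project. Note that it overwrites any existing public/robots.txt.

```shell
#!/bin/sh
# Assumption: the current directory is the root of the Next.js project.

# -p makes this a no-op when public/ already exists.
mkdir -p public

# Write the example rules. Quoting 'EOF' stops the shell from expanding
# anything inside the heredoc, so the text is written verbatim.
cat > public/robots.txt <<'EOF'
# Allow all user agents.
User-agent: *
Allow: /

# User agent "Googlebot" should not crawl /nogooglebot/ or its subdirectories.
User-agent: Googlebot
Disallow: /nogooglebot/
EOF

# Print the file to confirm what was written.
cat public/robots.txt
```

The rule lines within each group are kept together, with a single blank line only between groups.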

Save your changes to the robots.txt file, make sure your development server is running (for example, with npm run dev), and open http://localhost:3000/robots.txt in your browser.

You should see the contents of your robots.txt file displayed on the page.
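You can run the same check from a terminal. This is a small sketch that assumes curl is installed and that the development server is listening on port 3000. The helper name check_robots is hypothetical and not part of Next.js.

```shell
#!/bin/sh
# check_robots URL
# Hypothetical helper: fetch a robots.txt URL and print its contents.
check_robots() {
  # -f: exit non-zero on HTTP 4xx/5xx responses
  # -sS: hide the progress meter but still print errors
  curl -fsS "$1"
}

# Usage while the development server is running:
# check_robots http://localhost:3000/robots.txt
```

Because of the -f flag, a missing file (HTTP 404) makes the command exit with a non-zero status instead of printing an error page.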

Then, you need to deploy this change to production to make your robots.txt file accessible to the world.

Once the deployment finishes, visit your website in a browser and verify that the robots.txt file is served at the root of your domain, e.g. https://www.example.com/robots.txt.
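For a slightly stricter check than eyeballing the page, this sketch prints the HTTP status code and content type returned for the file. It assumes curl is installed. The helper name robots_status is hypothetical, and the example.com URL is the placeholder domain from this article.

```shell
#!/bin/sh
# robots_status URL
# Hypothetical helper: print "<status code> <content type>" for a URL.
robots_status() {
  # -s: silent, -o /dev/null: discard the body,
  # -w: print the selected response details after the transfer.
  curl -s -o /dev/null -w '%{http_code} %{content_type}\n' "$1"
}

# Usage against your deployed site (replace with your own domain):
# robots_status https://www.example.com/robots.txt
```

A 200 status with a plain-text content type is what you would typically expect. A 404 suggests the file did not make it into the deployed /public directory.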

Your robots.txt file is now created and configured in your Next.js website.

Thanks for reading and happy coding!